Your visitors already know why they're not buying. Now you will too.

You're spending on traffic. Something on your page is stopping the sale. You don't know what. Here's a ranked list of exactly what it is, specific enough to hand to your developer with no briefing needed.

Pick your tier. Provide your URL. Get your report.

How much is your conversion rate costing you right now?

Move the sliders. See your number.

From URL to actionable report in 3 steps

Tell us what to look at

Buyer View: one page URL. No account, no setup, no traffic needed.

Buyer Click: your landing page + up to 3 ad sets (image, copy, headline). We score the ads and check if your page delivers what they promised.

Buyer Journey: your entry URL + ad sets. We trace the full path your buyers take from ad to checkout.

All tiers: add one sentence about your audience and we generate buyer personas to match.

Buyers browse your site

Each persona evaluates your page independently — the way a real prospect would. Then they argue. High scores get challenged for hidden weaknesses. Low scores get defended for overlooked strengths. What survives is what actually matters for your conversion rate.

For Buyer Click and Buyer Journey, we also score your ad creatives against your landing pages: what buyers were promised vs. what they found.

Get a report you can act on

A plain-language summary tells you what works, what to fix first, and how your visitors reacted — written for someone who runs a business, not a testing lab.

Behind it: the full technical analysis with specific scores for every area of your page, plus effort-tagged recommendations your developer can start on immediately.

Why you can trust these numbers and act on them

Standard AI audits are not grounded in reality, facts, or best practices. Studies have found that this kind of audit matches human experts only 26-39% of the time. That is roughly 3 to 4 times out of 10. BuyerEyes instead combines your buyers' perspectives with expert judgment based on proven best practices.

Scored against real examples, not AI guesswork

Your score isn't a number an AI made up. The system describes your page the way your customers would, in plain language. Then it compares that description against 150 real examples of what good and bad actually look like. Your customers' eyes. Not an AI's guess.

Full methodology

3 rounds where the system questions itself

Each buyer gives two opinions on each page. Every score above 7.0 gets challenged for hidden weaknesses. Every score below 4.0 gets defended for overlooked strengths. The findings you receive have been x-rayed by multiple buyers, not taken on one reviewer's word.
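For the technically curious, the challenge-and-defend rule above can be sketched in a few lines. This is only an illustration, not the BuyerEyes implementation: the 7.0 and 4.0 thresholds come from the text, while the `AreaScore` type, the `review_action` function, the 0-10 scale, and the sample scores are assumptions made up for the example.

```python
from dataclasses import dataclass

CHALLENGE_ABOVE = 7.0  # from the text: scores above 7.0 get challenged
DEFEND_BELOW = 4.0     # from the text: scores below 4.0 get defended


@dataclass
class AreaScore:
    """Hypothetical container for one scored area (assumed 0-10 scale)."""
    area: str
    score: float


def review_action(item: AreaScore) -> str:
    """Decide what the next review round does with a score."""
    if item.score > CHALLENGE_ABOVE:
        return "challenge"  # look for hidden weaknesses
    if item.score < DEFEND_BELOW:
        return "defend"     # look for overlooked strengths
    return "keep"           # mid-range scores pass through unchanged


# Sample scores, invented for illustration only.
scores = [
    AreaScore("Copy & messaging", 8.0),
    AreaScore("Trust & credibility", 3.5),
    AreaScore("Visual design", 7.0),
]
actions = {item.area: review_action(item) for item in scores}
print(actions)
```

Note that a score of exactly 7.0 is not challenged in this sketch, because the text says "above 7.0".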

Specific enough to hand to your developer

Every finding comes with a reason. "Your buy button is hard to find on mobile" — here's why and what to change. "Your headline works" — here's what not to break. Your developer or designer knows exactly where to go. No briefing needed.

Every finding tells you whether to act or look closer

Some findings are certain: "Fix this now." Others need a second look: "This might be a problem — verify before changing." The report is honest about what it knows and what needs more investigation. It never invents a recommendation to fill a gap.

Every page is tested against 6 questions your buyer actually asks:

- Who is this for?

- What do I get?

- Why should I believe you?

- When do I get it?

- Where do I go next?

- How does it work?

And a harder test: does any element on the page say something no buyer ever wanted to read?

"I always wanted to read about how long it took you to come up with the product name."

...said no buyer ever.

Six areas. Each one stands between your visitor and your checkout.

Your report covers six areas that decide whether a visitor buys or leaves. Each one comes with specific findings your team can fix — and the report tells you which fixes matter most.

Visual design

Is your page clear at a glance, or do visitors work to understand it?

Copy & messaging

Does your copy speak to buyers, or does it just talk about you?

CTA effectiveness

Are your buttons working as hard as the rest of your page?

Trust & credibility

Would a stranger trust your page enough to enter payment details?

Technical experience

Does your technology help buyers, or get in their way?

Purchase intent

Would real buyers actually buy from your page?

What a BuyerEyes audit actually looks like

Scores and insights from 4 real audits. Each report surfaces the specific conflict between what a website does well and what holds it back.


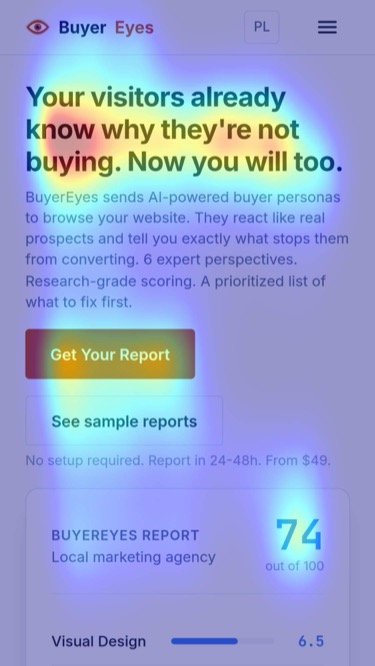

Where do visitors actually look?

Every report includes a visual attention heatmap generated from a single screenshot. No traffic required, no panel recruitment. Hotjar needs 2,000 visits to show you a heatmap. All BuyerEyes needs is a screenshot, taken and analyzed automatically.

Validated on 640 web pages against real eye-tracking data. Shows whether your hero, CTA, and key messaging sit inside the attention hotspots or get ignored.

A differentiated value proposition targeting desk workers and injury recovery. The site rejected the typical gym-bro culture for a "movement craftsman" persona. Copy scored 8.0 with highly effective messaging.

But the technical layer told a different story. Load times and form friction created a gap between what the page promised and how it felt to use.

Top recommendations

- Low effort: Fix hero headline to immediately communicate the desk-to-movement value proposition

- Low effort: Clean up duplicate review content that dilutes social proof

- Medium effort: Reduce form friction to match the quality of the copy

A direct-response lead-gen page using the PAS framework. The copy targeted a specific pain point: businesses experiencing a "referral drought." Messaging scored 8.4, the highest in our sample.

The gap appeared in trust signals. Claims about a portal network lacked verification, and the brand felt impersonal despite strong messaging.

Top recommendations

- Medium effort: Verify portal network claims with concrete data or remove them

- Low effort: Optimize hero CTA prominence to match the quality of surrounding copy

- Medium effort: Humanize the brand with team photos or founder story

Vetresor offers veterinary care in Poland for Swedish pet owners, with savings around 80%. A strong message backed by the founder's personal story. But the page didn't let that story breathe.

An oversized logo consumed the viewport. CTAs were buried below the fold. The form had usability issues that created friction at the exact moment trust was needed most.

Top recommendations

- Low effort: Fix form usability to reduce abandonment at the conversion point

- Medium effort: Optimize hero hierarchy by reducing logo size and moving the CTA above the fold

- Medium effort: Bridge credibility gap by naming partner clinics and surgeon credentials

A clean B2B lead-gen page leveraging a strong founder brand. The "Day in the Life" schedule was a standout: it demystified AI adoption by showing concrete daily usage instead of abstract promises.

The report flagged a monetization gap. Paid programs were hidden inside the FAQ section, invisible to buyers who were ready to spend. Mobile form friction added unnecessary resistance to the free starter flow.

Top recommendations

- Low effort: Fix mobile form friction to reduce drop-off on the free starter kit signup

- Medium effort: Ground social proof with real names and outcomes instead of anonymous metrics

- Medium effort: Surface paid programs from FAQ into a dedicated pricing or programs section

Your review section: trust signal or red flag?

BuyerEyes checks your review section not just for presence, but for signs that something looks off: did all the reviews arrive in the same week? Are the ratings suspiciously uniform? Does every review sound like the same person wrote it? Buyers notice when a review section looks manufactured. The audit tells you if yours does.

Example from a real audit (anonymized): "23 of 47 reviews arrived in an 11-day window. That pattern looks manufactured. A skeptical first-time buyer will notice."
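One way a timing check like this could work is a sliding window over review dates. The sketch below is an assumption about the general technique, not a description of how BuyerEyes does it: the function name `max_reviews_in_window`, the 40% flag threshold, and the generated dates are all hypothetical. Only the 23-of-47 and 11-day figures come from the example above.

```python
from datetime import date, timedelta


def max_reviews_in_window(dates: list[date], window_days: int) -> int:
    """Largest number of reviews falling inside any span of window_days calendar days."""
    ordered = sorted(dates)
    best = 0
    start = 0
    for end, current in enumerate(ordered):
        # Drop the oldest reviews until the span covers at most window_days days.
        while (current - ordered[start]).days >= window_days:
            start += 1
        best = max(best, end - start + 1)
    return best


# Synthetic data shaped like the example: 47 reviews, 23 of them in one 11-day burst.
burst = [date(2024, 3, 1) + timedelta(days=i % 11) for i in range(23)]
spread = [date(2022, 1, 1) + timedelta(days=20 * i) for i in range(24)]
review_dates = burst + spread

peak = max_reviews_in_window(review_dates, window_days=11)
share = peak / len(review_dates)

FLAG_SHARE = 0.4  # hypothetical threshold, chosen only for this illustration
looks_manufactured = share >= FLAG_SHARE
print(f"{peak} of {len(review_dates)} reviews in an 11-day window ({share:.0%})")
```

A real check would also need to account for legitimate bursts, such as a product launch or a review request email, which is why a finding like this fits the "verify before changing" category.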

The methodology behind the scores

Built by Kamil Andrusz

30+ years in Internet infrastructure. Started with Linux/Unix systems in 1995, then moved through security consulting for Lufthansa and telecom project management for Nokia (on services used by millions of people) to WooCommerce performance, conversion rate optimization, and custom AI solutions. Master of Law. Certified Scrum Master. Likes facts, data, and science. That's why the system behind BuyerEyes is built on published research and tested against real human expert judgments: to make your AI buyers act like human buyers.

What our customers found in their reports

Honestly? I thought my site was doing its job. It loads fast, it looks clean, the story is there. So when BuyerEyes scored it 42 out of 100, my first reaction was "no way." Then I read the persona analysis, five simulated visitors, each with their own doubts and hesitations, and it clicked. People were landing on my page, getting interested, and then... bookmarking it. Not contacting me. Because my contact form was buried, my headline didn't explain what I actually do fast enough, and there was no clear next step on mobile. These aren't things you see when you stare at your own site every day. I needed an outside perspective, and BuyerEyes gave me exactly that.

We test what we sell. Here are our own results.

Before asking you to trust BuyerEyes, we pointed it at our own site. Every score, every finding, every fix — published.

Score: 68/100. Trust: 4.5 out of 10 — and we know exactly why. The audit found missing contact info, no visible social proof, and three competing CTAs above the fold. Copy scored 9.5, but the page didn't feel safe to buy from. That's the point: this tool finds what you wouldn't see yourself.

We published the full report — every finding, every score, every persona reaction — and started fixing. Four rounds of changes so far, all documented.

Want your site to be our next case study?

We'll audit your page and publish the results (with your permission). Full report, zero cost for the first 3 stores.

hello@buyereyes.ai

One page. One campaign. Your whole funnel.

Pick where you need clarity. Every tier tells you what's working, what's not, and what to fix first — in plain language you can act on today.

Buyer View

- 5 simulated buyers review your page

- 1 page analyzed in depth

- Visual attention map — see where visitors actually look

- Detailed breakdown of what works and what to fix first

- Prioritized fix list ranked by effort and impact

- Plain-language summary you can act on today

- Full technical report included

One-time payment. Report in ~24 hours.

Buyer Click

- 5 simulated buyers evaluate your landing page

- Up to 3 ad sets reviewed (image, copy, headline)

- Are your ads convincing? Score for each creative

- Does your page deliver what the ad promised? We check

- Visual attention map for ads and page

- Custom audience brief

- Plain-language verdict: where the disconnect is

- Competitor benchmark — see how you stack up against a rival page

- Full technical report included

One-time payment. Report in ~48 hours.

Buyer Journey

- 10 simulated buyers across your full funnel

- Up to 10 pages analyzed end-to-end

- Up to 5 ad sets reviewed

- Find where buyers drop off between steps

- Full walkthrough of your buyer's path

- Ad-to-page match check for each creative

- Complete priority roadmap: what to fix and in what order

- Competitor benchmark on your landing page

- Plain-language summary + full technical report

One-time payment. Full funnel, start to finish.

A CRO agency charges $2,000-5,000+ for one analyst's opinion over 2-4 weeks. You get multiple AI reviewers that challenge each other's findings, a detailed breakdown of every area of your page, and a plain-language summary your team can act on the same day. Reports arrive in 24-72 hours depending on tier, starting at $49.

Who is this NOT for?

You don't need your site to convert.

You prefer burning ad budget and hoping the landing page figures itself out. Fair enough. We're not for you.

You collect reports. You don't act on them.

BuyerEyes gives you a ranked list of what to fix. If that list is going to sit in your Downloads folder, save your $49.

You want someone to fix it for you.

BuyerEyes is a diagnostic tool. It tells you what's broken and in what order to fix it. The fixing part? That's on you and your team.

Before you buy

Every day without data is a day of guessing.

See what your buyers see. Find out what's stopping them from buying. Get a prioritized fix list your team can act on today. Report in 24-72h, depending on tier.

Get Your Report

From $49. Results in 24-72h. No account needed.